url stringlengths 61 61 | repository_url stringclasses 1 value | labels_url stringlengths 75 75 | comments_url stringlengths 70 70 | events_url stringlengths 68 68 | html_url stringlengths 49 51 | id int64 1.08B 1.73B | node_id stringlengths 18 19 | number int64 3.45k 5.9k | title stringlengths 1 290 | user dict | labels list | state stringclasses 2 values | locked bool 1 class | assignee dict | assignees list | milestone dict | comments list | created_at timestamp[s] | updated_at timestamp[s] | closed_at timestamp[s] | author_association stringclasses 3 values | active_lock_reason null | draft bool 2 classes | pull_request dict | body stringlengths 2 36.2k ⌀ | reactions dict | timeline_url stringlengths 70 70 | performed_via_github_app null | state_reason stringclasses 3 values | is_pull_request bool 2 classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5484 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5484/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5484/comments | https://api.github.com/repos/huggingface/datasets/issues/5484/events | https://github.com/huggingface/datasets/pull/5484 | 1,562,877,070 | PR_kwDODunzps5I1oaq | 5,484 | Update docs for `nyu_depth_v2` dataset | {

"login": "awsaf49",

"id": 36858976,

"node_id": "MDQ6VXNlcjM2ODU4OTc2",

"avatar_url": "https://avatars.githubusercontent.com/u/36858976?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/awsaf49",

"html_url": "https://github.com/awsaf49",

"followers_url": "https://api.github.com/users/awsaf49/followers",

"following_url": "https://api.github.com/users/awsaf49/following{/other_user}",

"gists_url": "https://api.github.com/users/awsaf49/gists{/gist_id}",

"starred_url": "https://api.github.com/users/awsaf49/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/awsaf49/subscriptions",

"organizations_url": "https://api.github.com/users/awsaf49/orgs",

"repos_url": "https://api.github.com/users/awsaf49/repos",

"events_url": "https://api.github.com/users/awsaf49/events{/privacy}",

"received_events_url": "https://api.github.com/users/awsaf49/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"I think I need to create another PR on https://huggingface.co/datasets/huggingface/documentation-images/tree/main/datasets for hosting the images there?",

"_The documentation is not available anymore as the PR was closed or merged._",

"Thanks for the update @awsaf49 !",

"> Thanks a lot for the updates!\r\n> ... | 2023-01-30T17:37:08 | 2023-03-23T10:41:12 | 2023-02-05T14:15:04 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5484",

"html_url": "https://github.com/huggingface/datasets/pull/5484",

"diff_url": "https://github.com/huggingface/datasets/pull/5484.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5484.patch",

"merged_at": "2023-02-05T14:15:04"

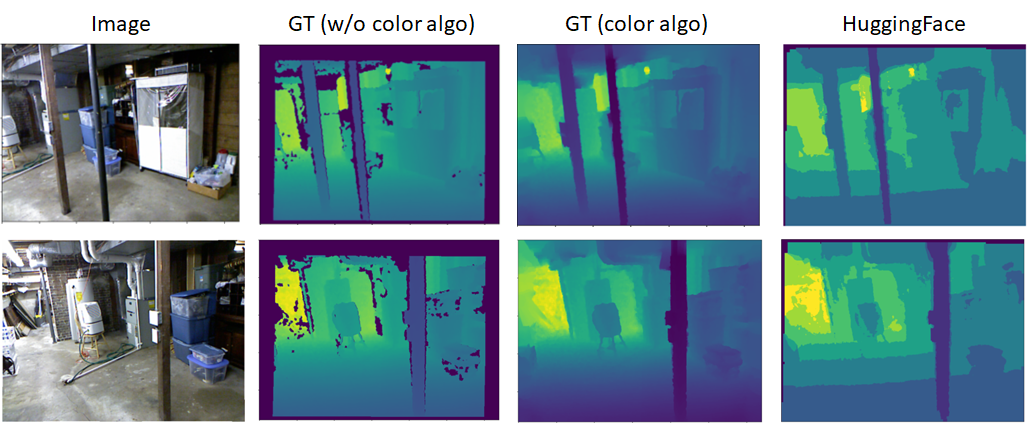

} | This PR will fix the issue mentioned in #5461.

cc: @sayakpaul @lhoestq

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5484/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5484/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5483 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5483/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5483/comments | https://api.github.com/repos/huggingface/datasets/issues/5483/events | https://github.com/huggingface/datasets/issues/5483 | 1,560,894,690 | I_kwDODunzps5dCVzi | 5,483 | Unable to upload dataset | {

"login": "yuvalkirstain",

"id": 57996478,

"node_id": "MDQ6VXNlcjU3OTk2NDc4",

"avatar_url": "https://avatars.githubusercontent.com/u/57996478?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/yuvalkirstain",

"html_url": "https://github.com/yuvalkirstain",

"followers_url": "https://api.github.com/users/yuvalkirstain/followers",

"following_url": "https://api.github.com/users/yuvalkirstain/following{/other_user}",

"gists_url": "https://api.github.com/users/yuvalkirstain/gists{/gist_id}",

"starred_url": "https://api.github.com/users/yuvalkirstain/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/yuvalkirstain/subscriptions",

"organizations_url": "https://api.github.com/users/yuvalkirstain/orgs",

"repos_url": "https://api.github.com/users/yuvalkirstain/repos",

"events_url": "https://api.github.com/users/yuvalkirstain/events{/privacy}",

"received_events_url": "https://api.github.com/users/yuvalkirstain/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Seems to work now, perhaps it was something internal with our university's network."

] | 2023-01-28T15:18:26 | 2023-01-29T08:09:49 | 2023-01-29T08:09:49 | NONE | null | null | null | ### Describe the bug

Uploading a simple dataset ends with an exception

### Steps to reproduce the bug

I created a new conda env with Python 3.10, installed `datasets` with pip, and ran:

```python

>>> from datasets import load_dataset, load_from_disk, Dataset

>>> d = Dataset.from_dict({"text": ["hello"] * 2})

>>> d.push_to_hub("ttt111")

/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_hf_folder.py:92: UserWarning: A token has been found in `/a/home/cc/students/cs/kirstain/.huggingface/token`. This is the old path where tokens were stored. The new location is `/home/olab/kirstain/.cache/huggingface/token` which is configurable using `HF_HOME` environment variable. Your token has been copied to this new location. You can now safely delete the old token file manually or use `huggingface-cli logout`.

warnings.warn(

Creating parquet from Arrow format: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 1/1 [00:00<00:00, 279.94ba/s]

Upload 1 LFS files: 0%| | 0/1 [00:02<?, ?it/s]

Pushing dataset shards to the dataset hub: 0%| | 0/1 [00:04<?, ?it/s]

Traceback (most recent call last):

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_errors.py", line 264, in hf_raise_for_status

response.raise_for_status()

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/requests/models.py", line 1021, in raise_for_status

raise HTTPError(http_error_msg, response=self)

requests.exceptions.HTTPError: 403 Client Error: Forbidden for url: https://s3.us-east-1.amazonaws.com/lfs.huggingface.co/repos/cf/0c/cf0c5ab8a3f729e5f57a8b79a36ecea64a31126f13218591c27ed9a1c7bd9b41/ece885a4bb6bbc8c1bb51b45542b805283d74590f72cd4c45d3ba76628570386?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=AKIA4N7VTDGO27GPWFUO%2F20230128%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230128T151640Z&X-Amz-Expires=900&X-Amz-Signature=89e78e9a9d70add7ed93d453334f4f93c6f29d889d46750a1f2da04af73978db&X-Amz-SignedHeaders=host&x-amz-storage-class=INTELLIGENT_TIERING&x-id=PutObject

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/_commit_api.py", line 334, in _inner_upload_lfs_object

return _upload_lfs_object(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/_commit_api.py", line 391, in _upload_lfs_object

lfs_upload(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/lfs.py", line 273, in lfs_upload

_upload_single_part(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/lfs.py", line 305, in _upload_single_part

hf_raise_for_status(upload_res)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_errors.py", line 318, in hf_raise_for_status

raise HfHubHTTPError(str(e), response=response) from e

huggingface_hub.utils._errors.HfHubHTTPError: 403 Client Error: Forbidden for url: https://s3.us-east-1.amazonaws.com/lfs.huggingface.co/repos/cf/0c/cf0c5ab8a3f729e5f57a8b79a36ecea64a31126f13218591c27ed9a1c7bd9b41/ece885a4bb6bbc8c1bb51b45542b805283d74590f72cd4c45d3ba76628570386?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=AKIA4N7VTDGO27GPWFUO%2F20230128%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Date=20230128T151640Z&X-Amz-Expires=900&X-Amz-Signature=89e78e9a9d70add7ed93d453334f4f93c6f29d889d46750a1f2da04af73978db&X-Amz-SignedHeaders=host&x-amz-storage-class=INTELLIGENT_TIERING&x-id=PutObject

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/datasets/arrow_dataset.py", line 4909, in push_to_hub

repo_id, split, uploaded_size, dataset_nbytes, repo_files, deleted_size = self._push_parquet_shards_to_hub(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/datasets/arrow_dataset.py", line 4804, in _push_parquet_shards_to_hub

_retry(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/datasets/utils/file_utils.py", line 281, in _retry

return func(*func_args, **func_kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 124, in _inner_fn

return fn(*args, **kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 2537, in upload_file

commit_info = self.create_commit(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 124, in _inner_fn

return fn(*args, **kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 2346, in create_commit

upload_lfs_files(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 124, in _inner_fn

return fn(*args, **kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/_commit_api.py", line 346, in upload_lfs_files

thread_map(

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/tqdm/contrib/concurrent.py", line 94, in thread_map

return _executor_map(ThreadPoolExecutor, fn, *iterables, **tqdm_kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/tqdm/contrib/concurrent.py", line 76, in _executor_map

return list(tqdm_class(ex.map(fn, *iterables, **map_args), **kwargs))

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/tqdm/std.py", line 1195, in __iter__

for obj in iterable:

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/concurrent/futures/_base.py", line 621, in result_iterator

yield _result_or_cancel(fs.pop())

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/concurrent/futures/_base.py", line 319, in _result_or_cancel

return fut.result(timeout)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/concurrent/futures/_base.py", line 458, in result

return self.__get_result()

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/concurrent/futures/_base.py", line 403, in __get_result

raise self._exception

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/concurrent/futures/thread.py", line 58, in run

result = self.fn(*self.args, **self.kwargs)

File "/home/olab/kirstain/anaconda3/envs/datasets/lib/python3.10/site-packages/huggingface_hub/_commit_api.py", line 338, in _inner_upload_lfs_object

raise RuntimeError(

RuntimeError: Error while uploading 'data/train-00000-of-00001-6df93048e66df326.parquet' to the Hub.

```

### Expected behavior

The dataset should be uploaded without any exceptions

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-4.15.0-65-generic-x86_64-with-glibc2.27

- Python version: 3.10.9

- PyArrow version: 11.0.0

- Pandas version: 1.5.3

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5483/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5483/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5482 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5482/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5482/comments | https://api.github.com/repos/huggingface/datasets/issues/5482/events | https://github.com/huggingface/datasets/issues/5482 | 1,560,853,137 | I_kwDODunzps5dCLqR | 5,482 | Reload features from Parquet metadata | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

},

{

"id": 3761482852,

"node_id": "LA_k... | closed | false | {

"login": "MFreidank",

"id": 6368040,

"node_id": "MDQ6VXNlcjYzNjgwNDA=",

"avatar_url": "https://avatars.githubusercontent.com/u/6368040?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/MFreidank",

"html_url": "https://github.com/MFreidank",

"followers_url": "https://api.github.com/users/MFreidank/followers",

"following_url": "https://api.github.com/users/MFreidank/following{/other_user}",

"gists_url": "https://api.github.com/users/MFreidank/gists{/gist_id}",

"starred_url": "https://api.github.com/users/MFreidank/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/MFreidank/subscriptions",

"organizations_url": "https://api.github.com/users/MFreidank/orgs",

"repos_url": "https://api.github.com/users/MFreidank/repos",

"events_url": "https://api.github.com/users/MFreidank/events{/privacy}",

"received_events_url": "https://api.github.com/users/MFreidank/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "MFreidank",

"id": 6368040,

"node_id": "MDQ6VXNlcjYzNjgwNDA=",

"avatar_url": "https://avatars.githubusercontent.com/u/6368040?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/MFreidank",

"html_url": "https://github.com/MFreidank",

"followers_url": "https://api... | null | [

"I'd be happy to have a look, if nobody else has started working on this yet @lhoestq. \r\n\r\nIt seems to me that for the `arrow` format features are currently attached as metadata [in `datasets.arrow_writer`](https://github.com/huggingface/datasets/blob/5f810b7011a8a4ab077a1847c024d2d9e267b065/src/datasets/arrow_... | 2023-01-28T13:12:31 | 2023-02-12T15:57:02 | 2023-02-12T15:57:02 | MEMBER | null | null | null | The idea would be to allow this :

```python

ds.to_parquet("my_dataset/ds.parquet")

reloaded = load_dataset("my_dataset")

assert ds.features == reloaded.features

```

And it should also work with Image and Audio types (right now they're reloaded as a dict type)

This can be implemented by storing and reading the feature types in the parquet metadata, as we do for arrow files. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5482/reactions",

"total_count": 1,

"+1": 1,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5482/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5481 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5481/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5481/comments | https://api.github.com/repos/huggingface/datasets/issues/5481/events | https://github.com/huggingface/datasets/issues/5481 | 1,560,468,195 | I_kwDODunzps5dAtrj | 5,481 | Load a cached dataset as iterable | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

},

{

"id": 3761482852,

"node_id": "LA_k... | open | false | null | [] | null | [

"Can I work on this issue? I am pretty new to this.",

"Hi ! Sure :) you can comment `#self-assign` to assign yourself to this issue.\r\n\r\nI can give you some pointers to get started:\r\n\r\n`load_dataset` works roughly this way:\r\n1. it instantiates a dataset builder using `load_dataset_builder()`\r\n2. the bui... | 2023-01-27T21:43:51 | 2023-05-15T19:28:11 | null | MEMBER | null | null | null | The idea would be to allow something like

```python

ds = load_dataset("c4", "en", as_iterable=True)

```

To be used to train models. It would load an IterableDataset from the cached Arrow files.

Cc @stas00

Edit : from the discussions we may load from cache when streaming=True | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5481/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 3,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5481/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5480 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5480/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5480/comments | https://api.github.com/repos/huggingface/datasets/issues/5480/events | https://github.com/huggingface/datasets/pull/5480 | 1,560,364,866 | PR_kwDODunzps5ItY2y | 5,480 | Select columns of Dataset or DatasetDict | {

"login": "daskol",

"id": 9336514,

"node_id": "MDQ6VXNlcjkzMzY1MTQ=",

"avatar_url": "https://avatars.githubusercontent.com/u/9336514?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/daskol",

"html_url": "https://github.com/daskol",

"followers_url": "https://api.github.com/users/daskol/followers",

"following_url": "https://api.github.com/users/daskol/following{/other_user}",

"gists_url": "https://api.github.com/users/daskol/gists{/gist_id}",

"starred_url": "https://api.github.com/users/daskol/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/daskol/subscriptions",

"organizations_url": "https://api.github.com/users/daskol/orgs",

"repos_url": "https://api.github.com/users/daskol/repos",

"events_url": "https://api.github.com/users/daskol/events{/privacy}",

"received_events_url": "https://api.github.com/users/daskol/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-27T20:06:16 | 2023-02-13T11:10:13 | 2023-02-13T09:59:35 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5480",

"html_url": "https://github.com/huggingface/datasets/pull/5480",

"diff_url": "https://github.com/huggingface/datasets/pull/5480.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5480.patch",

"merged_at": "2023-02-13T09:59:35"

} | Close #5474 and #5468. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5480/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5480/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5479 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5479/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5479/comments | https://api.github.com/repos/huggingface/datasets/issues/5479/events | https://github.com/huggingface/datasets/issues/5479 | 1,560,357,590 | I_kwDODunzps5dASrW | 5,479 | audiofolder works on local env, but creates empty dataset in a remote one, what dependencies could I be missing/outdated | {

"login": "jcho19",

"id": 107211437,

"node_id": "U_kgDOBmPqrQ",

"avatar_url": "https://avatars.githubusercontent.com/u/107211437?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jcho19",

"html_url": "https://github.com/jcho19",

"followers_url": "https://api.github.com/users/jcho19/followers",

"following_url": "https://api.github.com/users/jcho19/following{/other_user}",

"gists_url": "https://api.github.com/users/jcho19/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jcho19/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jcho19/subscriptions",

"organizations_url": "https://api.github.com/users/jcho19/orgs",

"repos_url": "https://api.github.com/users/jcho19/repos",

"events_url": "https://api.github.com/users/jcho19/events{/privacy}",

"received_events_url": "https://api.github.com/users/jcho19/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2023-01-27T20:01:22 | 2023-01-29T05:23:14 | 2023-01-29T05:23:14 | NONE | null | null | null | ### Describe the bug

I'm using a custom audio dataset (400+ audio files) in the correct format for audiofolder. Although loading the dataset with audiofolder works in one local setup, it doesn't in a remote one (it just creates an empty dataset). I have both ffmpeg and libsndfile installed on both computers. What could be missing or need to be updated on the one that doesn't work? On the remote env, libsndfile is 1.0.28 and ffmpeg is 4.2.1.

from datasets import load_dataset

ds = load_dataset("audiofolder", data_dir="...")

Here is the output (should be generating 400+ rows):

Downloading and preparing dataset audiofolder/default to ...

Downloading data files: 0%| | 0/2 [00:00<?, ?it/s]

Downloading data files: 0it [00:00, ?it/s]

Extracting data files: 0it [00:00, ?it/s]

Generating train split: 0 examples [00:00, ? examples/s]

Dataset audiofolder downloaded and prepared to ... Subsequent calls will reuse this data.

0%| | 0/1 [00:00<?, ?it/s]

DatasetDict({

train: Dataset({

features: ['audio', 'transcription'],

num_rows: 1

})

})

Here is the pip environment on the machine where it doesn't work (it uses torch 1.11.0a0 from a shared env):

Package Version

------------------- -------------------

aiofiles 22.1.0

aiohttp 3.8.3

aiosignal 1.3.1

altair 4.2.1

anyio 3.6.2

appdirs 1.4.4

argcomplete 2.0.0

argon2-cffi 20.1.0

astunparse 1.6.3

async-timeout 4.0.2

attrs 21.2.0

audioread 3.0.0

backcall 0.2.0

bleach 4.0.0

certifi 2021.10.8

cffi 1.14.6

charset-normalizer 2.0.12

click 8.1.3

contourpy 1.0.7

cycler 0.11.0

datasets 2.9.0

debugpy 1.4.1

decorator 5.0.9

defusedxml 0.7.1

dill 0.3.6

distlib 0.3.4

entrypoints 0.3

evaluate 0.4.0

expecttest 0.1.3

fastapi 0.89.1

ffmpy 0.3.0

filelock 3.6.0

fonttools 4.38.0

frozenlist 1.3.3

fsspec 2023.1.0

future 0.18.2

gradio 3.16.2

h11 0.14.0

httpcore 0.16.3

httpx 0.23.3

huggingface-hub 0.12.0

idna 3.3

ipykernel 6.2.0

ipython 7.26.0

ipython-genutils 0.2.0

ipywidgets 7.6.3

jedi 0.18.0

Jinja2 3.0.1

jiwer 2.5.1

joblib 1.2.0

jsonschema 3.2.0

jupyter 1.0.0

jupyter-client 6.1.12

jupyter-console 6.4.0

jupyter-core 4.7.1

jupyterlab-pygments 0.1.2

jupyterlab-widgets 1.0.0

kiwisolver 1.4.4

Levenshtein 0.20.2

librosa 0.9.2

linkify-it-py 1.0.3

llvmlite 0.39.1

markdown-it-py 2.1.0

MarkupSafe 2.0.1

matplotlib 3.6.3

matplotlib-inline 0.1.2

mdit-py-plugins 0.3.3

mdurl 0.1.2

mistune 0.8.4

multidict 6.0.4

multiprocess 0.70.14

nbclient 0.5.4

nbconvert 6.1.0

nbformat 5.1.3

nest-asyncio 1.5.1

notebook 6.4.3

numba 0.56.4

numpy 1.20.3

orjson 3.8.5

packaging 21.0

pandas 1.5.3

pandocfilters 1.4.3

parso 0.8.2

pexpect 4.8.0

pickleshare 0.7.5

Pillow 9.4.0

pip 22.3.1

pipx 1.1.0

platformdirs 2.5.2

pooch 1.6.0

prometheus-client 0.11.0

prompt-toolkit 3.0.19

psutil 5.9.0

ptyprocess 0.7.0

pyarrow 10.0.1

pycparser 2.20

pycryptodome 3.16.0

pydantic 1.10.4

pydub 0.25.1

Pygments 2.10.0

pyparsing 2.4.7

pyrsistent 0.18.0

python-dateutil 2.8.2

python-multipart 0.0.5

pytz 2022.7.1

PyYAML 6.0

pyzmq 22.2.1

qtconsole 5.1.1

QtPy 1.10.0

rapidfuzz 2.13.7

regex 2022.10.31

requests 2.27.1

resampy 0.4.2

responses 0.18.0

rfc3986 1.5.0

scikit-learn 1.2.1

scipy 1.6.3

Send2Trash 1.8.0

setuptools 65.5.1

shiboken6 6.3.1

shiboken6-generator 6.3.1

six 1.16.0

sniffio 1.3.0

soundfile 0.11.0

starlette 0.22.0

terminado 0.11.0

testpath 0.5.0

threadpoolctl 3.1.0

tokenizers 0.13.2

toolz 0.12.0

torch 1.11.0a0+gitunknown

tornado 6.1

tqdm 4.64.1

traitlets 5.0.5

transformers 4.27.0.dev0

types-dataclasses 0.6.4

typing_extensions 4.1.1

uc-micro-py 1.0.1

urllib3 1.26.9

userpath 1.8.0

uvicorn 0.20.0

virtualenv 20.14.1

wcwidth 0.2.5

webencodings 0.5.1

websockets 10.4

wheel 0.37.1

widgetsnbextension 3.5.1

xxhash 3.2.0

yarl 1.8.2

### Steps to reproduce the bug

Create a pip environment with the packages listed above (make sure ffmpeg and libsndfile are installed with the same versions listed above).

Create a custom audio dataset and load it in with load_dataset("audiofolder", ...)

### Expected behavior

load_dataset should create a dataset with 400+ rows.
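
One way to narrow this down might be to count the audio files that the remote machine can actually see under `data_dir` and compare that number with `num_rows`. The sketch below uses only the standard library; the helper name `count_audio_files` and the extension set are assumptions for illustration, not part of `datasets`.

```python
from pathlib import Path

# Assumed set of extensions; adjust to whatever the dataset actually contains.
AUDIO_EXTENSIONS = {".wav", ".mp3", ".flac", ".ogg"}


def count_audio_files(data_dir, extensions=AUDIO_EXTENSIONS):
    """Count files under data_dir (recursively) whose suffix is in extensions."""
    root = Path(data_dir)
    return sum(
        1
        for path in root.rglob("*")
        # Compare suffixes case-insensitively so "B.MP3" is counted too.
        if path.is_file() and path.suffix.lower() in extensions
    )
```

If this returns 400+ on the local machine but 0 on the remote one for the same `data_dir`, the problem is more likely the path or the file sync than a missing dependency.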

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-3.10.0-1160.80.1.el7.x86_64-x86_64-with-glibc2.17

- Python version: 3.9.0

- PyArrow version: 10.0.1

- Pandas version: 1.5.3 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5479/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5479/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5478 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5478/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5478/comments | https://api.github.com/repos/huggingface/datasets/issues/5478/events | https://github.com/huggingface/datasets/pull/5478 | 1,560,357,583 | PR_kwDODunzps5ItXQG | 5,478 | Tip for recomputing metadata | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-27T20:01:22 | 2023-01-30T19:22:21 | 2023-01-30T19:15:26 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5478",

"html_url": "https://github.com/huggingface/datasets/pull/5478",

"diff_url": "https://github.com/huggingface/datasets/pull/5478.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5478.patch",

"merged_at": "2023-01-30T19:15:26"

} | From this [feedback](https://discuss.huggingface.co/t/nonmatchingsplitssizeserror/30033) on the forum, thought I'd include a tip for recomputing the metadata numbers if it is your own dataset. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5478/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5478/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5477 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5477/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5477/comments | https://api.github.com/repos/huggingface/datasets/issues/5477/events | https://github.com/huggingface/datasets/issues/5477 | 1,559,909,892 | I_kwDODunzps5c-lYE | 5,477 | Unpin sqlalchemy once issue is fixed | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"@albertvillanova It looks like that issue has been fixed so I made a PR to unpin sqlalchemy! ",

"The source issue:\r\n- https://github.com/pandas-dev/pandas/issues/40686\r\n\r\nhas been fixed:\r\n- https://github.com/pandas-dev/pandas/pull/48576\r\n\r\nThe fix was released yesterday (2023-04-03) only in `pandas-... | 2023-01-27T15:01:55 | 2023-04-04T08:06:43 | null | MEMBER | null | null | null | Once the source issue is fixed:

- pandas-dev/pandas#51015

we should revert the pin introduced in:

- #5476 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5477/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5477/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5476 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5476/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5476/comments | https://api.github.com/repos/huggingface/datasets/issues/5476/events | https://github.com/huggingface/datasets/pull/5476 | 1,559,594,684 | PR_kwDODunzps5IqwC_ | 5,476 | Pin sqlalchemy | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-27T11:26:38 | 2023-01-27T12:06:51 | 2023-01-27T11:57:48 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5476",

"html_url": "https://github.com/huggingface/datasets/pull/5476",

"diff_url": "https://github.com/huggingface/datasets/pull/5476.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5476.patch",

"merged_at": "2023-01-27T11:57:48"

} | Since sqlalchemy was updated to 2.0.0, the CI has started to fail: https://github.com/huggingface/datasets/actions/runs/4023742457/jobs/6914976514

The error comes from pandas: https://github.com/pandas-dev/pandas/issues/51015 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5476/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5476/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5475 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5475/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5475/comments | https://api.github.com/repos/huggingface/datasets/issues/5475/events | https://github.com/huggingface/datasets/issues/5475 | 1,559,030,149 | I_kwDODunzps5c7OmF | 5,475 | Dataset scan time is much slower than using native arrow | {

"login": "jonny-cyberhaven",

"id": 121845112,

"node_id": "U_kgDOB0M1eA",

"avatar_url": "https://avatars.githubusercontent.com/u/121845112?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jonny-cyberhaven",

"html_url": "https://github.com/jonny-cyberhaven",

"followers_url": "https://api.github.com/users/jonny-cyberhaven/followers",

"following_url": "https://api.github.com/users/jonny-cyberhaven/following{/other_user}",

"gists_url": "https://api.github.com/users/jonny-cyberhaven/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jonny-cyberhaven/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jonny-cyberhaven/subscriptions",

"organizations_url": "https://api.github.com/users/jonny-cyberhaven/orgs",

"repos_url": "https://api.github.com/users/jonny-cyberhaven/repos",

"events_url": "https://api.github.com/users/jonny-cyberhaven/events{/privacy}",

"received_events_url": "https://api.github.com/users/jonny-cyberhaven/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Hi ! In your code you only iterate on the Arrow buffers - you don't actually load the data as python objects. For a fair comparison, you can modify your code using:\r\n```diff\r\n- for _ in range(0, len(table), bsz):\r\n- _ = {k:table[k][_ : _ + bsz] for k in cols}\r\n+ for _ in range(0, len(table)... | 2023-01-27T01:32:25 | 2023-01-30T16:17:11 | 2023-01-30T16:17:11 | CONTRIBUTOR | null | null | null | ### Describe the bug

I'm basically running the same scanning experiment from the tutorials https://huggingface.co/course/chapter5/4?fw=pt except now I'm comparing to a native pyarrow version.

I'm finding that the native pyarrow approach is much faster (2 orders of magnitude). Is there something I'm missing that explains this phenomenon?

### Steps to reproduce the bug

https://colab.research.google.com/drive/11EtHDaGAf1DKCpvYnAPJUW-LFfAcDzHY?usp=sharing

### Expected behavior

I expect scan times to be on par with using pyarrow directly.

### Environment info

standard colab environment | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5475/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5475/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5474 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5474/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5474/comments | https://api.github.com/repos/huggingface/datasets/issues/5474/events | https://github.com/huggingface/datasets/issues/5474 | 1,558,827,155 | I_kwDODunzps5c6dCT | 5,474 | Column project operation on `datasets.Dataset` | {

"login": "daskol",

"id": 9336514,

"node_id": "MDQ6VXNlcjkzMzY1MTQ=",

"avatar_url": "https://avatars.githubusercontent.com/u/9336514?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/daskol",

"html_url": "https://github.com/daskol",

"followers_url": "https://api.github.com/users/daskol/followers",

"following_url": "https://api.github.com/users/daskol/following{/other_user}",

"gists_url": "https://api.github.com/users/daskol/gists{/gist_id}",

"starred_url": "https://api.github.com/users/daskol/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/daskol/subscriptions",

"organizations_url": "https://api.github.com/users/daskol/orgs",

"repos_url": "https://api.github.com/users/daskol/repos",

"events_url": "https://api.github.com/users/daskol/events{/privacy}",

"received_events_url": "https://api.github.com/users/daskol/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892865,

"node_id": "MDU6TGFiZWwxOTM1ODkyODY1",

"url": "https://api.github.com/repos/huggingface/datasets/labels/duplicate",

"name": "duplicate",

"color": "cfd3d7",

"default": true,

"description": "This issue or pull request already exists"

},

{

"id": 1935892871,

"... | closed | false | null | [] | null | [

"Hi ! This would be a nice addition indeed :) This sounds like a duplicate of https://github.com/huggingface/datasets/issues/5468\r\n\r\n> Not sure. Some of my PRs are still open and some do not have any discussions.\r\n\r\nSorry to hear that, feel free to ping me on those PRs"

] | 2023-01-26T21:47:53 | 2023-02-13T09:59:37 | 2023-02-13T09:59:37 | CONTRIBUTOR | null | null | null | ### Feature request

There is no operation to select a subset of the columns of the original dataset. The expected API follows.

```python

a = Dataset.from_dict({

'int': [0, 1, 2],

'char': ['a', 'b', 'c'],

'none': [None] * 3,

})

b = a.project('int', 'char') # usually, .select()

print(a.column_names) # stdout: ['int', 'char', 'none']

print(b.column_names) # stdout: ['int', 'char']

```

The `project` method could easily accept not only column names (as `str`) but also, for example, a univariate function applied to the corresponding column. Keyword arguments could also be used to rename columns in advance (see `pandas`, `pyspark`, `pyarrow`, and SQL).
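
To make the keyword-argument idea concrete, here is a minimal sketch over a plain dict of columns rather than a real `datasets.Dataset`. The `project` function and its `new_name="old_name"` convention are assumptions made for illustration only; they are not an existing `datasets` API.

```python
from typing import Any, Dict, List


def project(columns: Dict[str, List[Any]], *names: str, **renamed: str) -> Dict[str, List[Any]]:
    """Hypothetical projection: keep `names` as-is, and add `new_name=old_name` columns."""
    # Positional arguments select columns under their current names.
    out = {name: columns[name] for name in names}
    # Keyword arguments select a column and expose it under a new name (assumed convention).
    for new_name, old_name in renamed.items():
        out[new_name] = columns[old_name]
    return out


a = {"int": [0, 1, 2], "char": ["a", "b", "c"], "none": [None] * 3}
b = project(a, "int", letter="char")
print(list(b))  # ['int', 'letter']
```

The input mapping is left untouched, which mirrors how `a.column_names` stays unchanged in the example above.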

### Motivation

Projection is a typical operation in every data processing library, and it is a basic building block of well-known data manipulation languages such as SQL. Without this operation, the `datasets.Dataset` interface is not complete.

### Your contribution

Not sure. Some of my PRs are still open and some do not have any discussions. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5474/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5474/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5473 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5473/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5473/comments | https://api.github.com/repos/huggingface/datasets/issues/5473/events | https://github.com/huggingface/datasets/pull/5473 | 1,558,668,197 | PR_kwDODunzps5Inm9h | 5,473 | Set dev version | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-26T19:34:44 | 2023-01-26T19:47:34 | 2023-01-26T19:38:30 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5473",

"html_url": "https://github.com/huggingface/datasets/pull/5473",

"diff_url": "https://github.com/huggingface/datasets/pull/5473.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5473.patch",

"merged_at": "2023-01-26T19:38:30"

} | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5473/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5473/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5472 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5472/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5472/comments | https://api.github.com/repos/huggingface/datasets/issues/5472/events | https://github.com/huggingface/datasets/pull/5472 | 1,558,662,251 | PR_kwDODunzps5Inlp8 | 5,472 | Release: 2.9.0 | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-26T19:29:42 | 2023-01-26T19:40:44 | 2023-01-26T19:33:00 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5472",

"html_url": "https://github.com/huggingface/datasets/pull/5472",

"diff_url": "https://github.com/huggingface/datasets/pull/5472.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5472.patch",

"merged_at": "2023-01-26T19:33:00"

} | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5472/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5472/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5471 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5471/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5471/comments | https://api.github.com/repos/huggingface/datasets/issues/5471/events | https://github.com/huggingface/datasets/pull/5471 | 1,558,557,545 | PR_kwDODunzps5InPA7 | 5,471 | Add num_test_batches option | {

"login": "amyeroberts",

"id": 22614925,

"node_id": "MDQ6VXNlcjIyNjE0OTI1",

"avatar_url": "https://avatars.githubusercontent.com/u/22614925?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/amyeroberts",

"html_url": "https://github.com/amyeroberts",

"followers_url": "https://api.github.com/users/amyeroberts/followers",

"following_url": "https://api.github.com/users/amyeroberts/following{/other_user}",

"gists_url": "https://api.github.com/users/amyeroberts/gists{/gist_id}",

"starred_url": "https://api.github.com/users/amyeroberts/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/amyeroberts/subscriptions",

"organizations_url": "https://api.github.com/users/amyeroberts/orgs",

"repos_url": "https://api.github.com/users/amyeroberts/repos",

"events_url": "https://api.github.com/users/amyeroberts/events{/privacy}",

"received_events_url": "https://api.github.com/users/amyeroberts/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"I thought this issue was resolved in my parallel `to_tf_dataset` PR! I changed the default `num_test_batches` in `_get_output_signature` to 20 and used a test batch size of 1 to maximize variance to detect shorter samples. I think it... | 2023-01-26T18:09:40 | 2023-01-27T18:16:45 | 2023-01-27T18:08:36 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5471",

"html_url": "https://github.com/huggingface/datasets/pull/5471",

"diff_url": "https://github.com/huggingface/datasets/pull/5471.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5471.patch",

"merged_at": "2023-01-27T18:08:36"

} | `to_tf_dataset` calls can be very costly because of the number of test batches drawn during `_get_output_signature`. The test batches are drawn in order to estimate the shapes when creating the TensorFlow dataset. This is necessary when the shapes can be irregular, but not when the tensor shapes are the same across all samples. This PR adds an option to change the number of batches drawn, so the user can speed this conversion up.
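
The trade-off can be illustrated with a small sketch. This is not the actual `_get_output_signature` implementation: `infer_static_shape` is a hypothetical helper and the shapes are made-up examples. It only assumes that the idea is to compare the shapes of a few sampled batches and keep a dimension as static when every sample agrees on it.

```python
from typing import List, Optional, Sequence, Tuple


def infer_static_shape(batch_shapes: Sequence[Tuple[int, ...]]) -> List[Optional[int]]:
    """Hypothetical helper: a dimension is static only if all sampled batches agree on it."""
    if not batch_shapes:
        raise ValueError("need at least one sampled batch shape")
    rank = len(batch_shapes[0])
    if any(len(shape) != rank for shape in batch_shapes):
        raise ValueError("sampled batches have different ranks")
    inferred: List[Optional[int]] = []
    # zip(*shapes) groups the sizes observed for each dimension across batches.
    for dim_sizes in zip(*batch_shapes):
        inferred.append(dim_sizes[0] if len(set(dim_sizes)) == 1 else None)
    return inferred


regular = [(8, 500, 500, 3)] * 3          # e.g. fixed-size images
irregular = [(8, 12), (8, 17), (8, 9)]    # e.g. variable-length sequences
print(infer_static_shape(regular))    # [8, 500, 500, 3]
print(infer_static_shape(irregular))  # [8, None]
```

With regular shapes, a single sampled batch already gives the same answer as many, which is why fewer test batches are enough in that case.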

Timings from running the following script with different values of `num_test_batches`:

```

import time

from datasets import load_dataset

from transformers import DefaultDataCollator

data_collator = DefaultDataCollator()

dataset = load_dataset("beans")

dataset = dataset["train"].with_format("np")

start = time.time()

dataset = dataset.to_tf_dataset(

columns=["image"],

label_cols=["label"],

batch_size=8,

collate_fn=data_collator,

num_test_batches=NUM_TEST_BATCHES,

)

end = time.time()

print(end - start)

```

NUM_TEST_BATCHES=200: 0.8197s

NUM_TEST_BATCHES=50: 0.3070s

NUM_TEST_BATCHES=2: 0.1417s

NUM_TEST_BATCHES=1: 0.1352s | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5471/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5471/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5470 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5470/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5470/comments | https://api.github.com/repos/huggingface/datasets/issues/5470/events | https://github.com/huggingface/datasets/pull/5470 | 1,558,542,611 | PR_kwDODunzps5InLw9 | 5,470 | Update dataset card creation | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"The CI failure is unrelated to your PR - feel free to merge :)",

"Haha thanks, you read my mind :)",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n##... | 2023-01-26T17:57:51 | 2023-01-27T16:27:00 | 2023-01-27T16:20:10 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5470",

"html_url": "https://github.com/huggingface/datasets/pull/5470",

"diff_url": "https://github.com/huggingface/datasets/pull/5470.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5470.patch",

"merged_at": "2023-01-27T16:20:10"

} | Encourages users to create a dataset card directly on the Hub with the new metadata UI and the dataset card template import, instead of telling them to manually create and upload one. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5470/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5470/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5469 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5469/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5469/comments | https://api.github.com/repos/huggingface/datasets/issues/5469/events | https://github.com/huggingface/datasets/pull/5469 | 1,558,346,906 | PR_kwDODunzps5Imhk2 | 5,469 | Remove deprecated `shard_size` arg from `.push_to_hub()` | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-26T15:40:56 | 2023-01-26T17:37:51 | 2023-01-26T17:30:59 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5469",

"html_url": "https://github.com/huggingface/datasets/pull/5469",

"diff_url": "https://github.com/huggingface/datasets/pull/5469.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5469.patch",

"merged_at": "2023-01-26T17:30:59"

} | The docstrings say that it has been deprecated since version 2.4.0. Can we remove it? | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5469/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5469/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5468 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5468/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5468/comments | https://api.github.com/repos/huggingface/datasets/issues/5468/events | https://github.com/huggingface/datasets/issues/5468 | 1,558,066,625 | I_kwDODunzps5c3jXB | 5,468 | Allow opposite of remove_columns on Dataset and DatasetDict | {

"login": "hollance",

"id": 346853,

"node_id": "MDQ6VXNlcjM0Njg1Mw==",

"avatar_url": "https://avatars.githubusercontent.com/u/346853?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/hollance",

"html_url": "https://github.com/hollance",

"followers_url": "https://api.github.com/users/hollance/followers",

"following_url": "https://api.github.com/users/hollance/following{/other_user}",

"gists_url": "https://api.github.com/users/hollance/gists{/gist_id}",

"starred_url": "https://api.github.com/users/hollance/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/hollance/subscriptions",

"organizations_url": "https://api.github.com/users/hollance/orgs",

"repos_url": "https://api.github.com/users/hollance/repos",

"events_url": "https://api.github.com/users/hollance/events{/privacy}",

"received_events_url": "https://api.github.com/users/hollance/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

},

{

"id": 1935892877,

"node_id": "MDU6... | closed | false | null | [] | null | [

"Hi! I agree it would be nice to have a method like that. Instead of `keep_columns`, we can name it `select_columns` to be more aligned with PyArrow's naming convention (`pa.Table.select`).",

"Hi, I am a newbie to open source and would like to contribute. @mariosasko can I take up this issue ?",

"Hey, I also wa... | 2023-01-26T12:28:09 | 2023-02-13T09:59:38 | 2023-02-13T09:59:38 | NONE | null | null | null | ### Feature request

In this blog post https://huggingface.co/blog/audio-datasets, I noticed the following code:

```python

COLUMNS_TO_KEEP = ["text", "audio"]

all_columns = gigaspeech["train"].column_names

columns_to_remove = set(all_columns) - set(COLUMNS_TO_KEEP)

gigaspeech = gigaspeech.remove_columns(columns_to_remove)

```

This kind of thing happens a lot when you don't need to keep all the columns of a dataset. It would be more convenient (and less error-prone) if you could just write:

```python

gigaspeech = gigaspeech.keep_columns(["text", "audio"])

```

Internally, `keep_columns` could still call `remove_columns`, but it expresses more clearly what the user's intent is.
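
As a rough sketch of that idea (not an existing API), the helper below computes what a `keep_columns` wrapper would pass on to `remove_columns`. The name `columns_to_remove` and the example column names are hypothetical, and the helper works on plain lists so that it runs without loading a dataset.

```python
from typing import Iterable, List


def columns_to_remove(all_columns: Iterable[str], columns_to_keep: Iterable[str]) -> List[str]:
    """Hypothetical helper: list every column that is not in `columns_to_keep`."""
    all_columns = list(all_columns)
    keep = set(columns_to_keep)
    # Fail early on typos instead of silently keeping fewer columns than intended.
    missing = keep - set(all_columns)
    if missing:
        raise ValueError(f"columns not in dataset: {sorted(missing)}")
    # Preserve the original column order in the result.
    return [name for name in all_columns if name not in keep]


# With a single `Dataset`, this could be used as:
#   dataset = dataset.remove_columns(columns_to_remove(dataset.column_names, ["text", "audio"]))
print(columns_to_remove(["segment_id", "text", "audio", "speaker"], ["text", "audio"]))
# ['segment_id', 'speaker']
```

Raising on unknown column names is a design choice of this sketch; it addresses the "less error prone" point, since a misspelled column would otherwise go unnoticed.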

### Motivation

Less code to write for the user of the dataset.

### Your contribution

- | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5468/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5468/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5467 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5467/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5467/comments | https://api.github.com/repos/huggingface/datasets/issues/5467/events | https://github.com/huggingface/datasets/pull/5467 | 1,557,898,273 | PR_kwDODunzps5IlAlk | 5,467 | Fix conda command in readme | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"ah didn't read well - it's all good",

"or maybe it isn't ? `-c huggingface -c conda-forge` installs from HF or from conda-forge ?",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | ... | 2023-01-26T10:03:01 | 2023-01-26T18:32:16 | 2023-01-26T18:29:37 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5467",

"html_url": "https://github.com/huggingface/datasets/pull/5467",

"diff_url": "https://github.com/huggingface/datasets/pull/5467.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5467.patch",

"merged_at": null

} | The [conda-forge channel](https://anaconda.org/conda-forge/datasets) is lagging behind (as of right now, only 2.7.1 is available). We should recommend using the [Hugging Face channel](https://anaconda.org/HuggingFace/datasets) that we maintain:

```

conda install -c huggingface datasets

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5467/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5467/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5466 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5466/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5466/comments | https://api.github.com/repos/huggingface/datasets/issues/5466/events | https://github.com/huggingface/datasets/pull/5466 | 1,557,584,845 | PR_kwDODunzps5Ij-z1 | 5,466 | remove pathlib.Path with URIs | {

"login": "jonny-cyberhaven",

"id": 121845112,

"node_id": "U_kgDOB0M1eA",

"avatar_url": "https://avatars.githubusercontent.com/u/121845112?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jonny-cyberhaven",

"html_url": "https://github.com/jonny-cyberhaven",

"followers_url": "https://api.github.com/users/jonny-cyberhaven/followers",

"following_url": "https://api.github.com/users/jonny-cyberhaven/following{/other_user}",

"gists_url": "https://api.github.com/users/jonny-cyberhaven/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jonny-cyberhaven/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jonny-cyberhaven/subscriptions",

"organizations_url": "https://api.github.com/users/jonny-cyberhaven/orgs",

"repos_url": "https://api.github.com/users/jonny-cyberhaven/repos",

"events_url": "https://api.github.com/users/jonny-cyberhaven/events{/privacy}",

"received_events_url": "https://api.github.com/users/jonny-cyberhaven/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Thanks !\r\n`os.path.join` will use a backslash `\\` on windows which will also fail. You can use this instead in `load_from_disk`:\r\n```python\r\nfrom .filesystems import is_remote_filesystem\r\n\r\nis_local = not is_remote_filesystem(fs)\r\npath_join = os.path.join if is_local else posixpath.join\r\n```",

"Th... | 2023-01-26T03:25:45 | 2023-01-26T17:08:57 | 2023-01-26T16:59:11 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5466",

"html_url": "https://github.com/huggingface/datasets/pull/5466",

"diff_url": "https://github.com/huggingface/datasets/pull/5466.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5466.patch",

"merged_at": "2023-01-26T16:59:11"

} | `pathlib.Path` collapses "//" into "/", which corrupts URIs and causes retry errors when downloading from cloud storage. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5466/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5466/timeline | null | null | true |
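
The slash-collapsing behaviour described in #5466 can be reproduced with the standard library alone. The snippet below is a minimal sketch, not the code changed in the PR; the bucket name and file name are hypothetical. It contrasts `pathlib` with `posixpath.join`, which the review comment suggests for remote filesystems.

```python
import posixpath
from pathlib import PurePosixPath

# Hypothetical remote URI, used only for illustration.
uri = "s3://my-bucket/datasets/train"

# pathlib treats the URI as a filesystem path and collapses the "//"
# after the scheme into a single "/".
mangled = str(PurePosixPath(uri) / "dataset_info.json")

# posixpath.join is plain string joining with "/" and leaves the
# scheme separator untouched.
joined = posixpath.join(uri, "dataset_info.json")

print(mangled)
print(joined)
```

Because `posixpath.join` always uses a forward slash, it also avoids the backslash that `os.path.join` would insert on Windows, which is why the reviewer proposes choosing between the two depending on whether the filesystem is local.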

https://api.github.com/repos/huggingface/datasets/issues/5465 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5465/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5465/comments | https://api.github.com/repos/huggingface/datasets/issues/5465/events | https://github.com/huggingface/datasets/issues/5465 | 1,557,510,618 | I_kwDODunzps5c1bna | 5,465 | audiofolder creates empty dataset even though the dataset passed in follows the correct structure | {

"login": "jcho19",

"id": 107211437,

"node_id": "U_kgDOBmPqrQ",

"avatar_url": "https://avatars.githubusercontent.com/u/107211437?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jcho19",

"html_url": "https://github.com/jcho19",

"followers_url": "https://api.github.com/users/jcho19/followers",

"following_url": "https://api.github.com/users/jcho19/following{/other_user}",

"gists_url": "https://api.github.com/users/jcho19/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jcho19/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jcho19/subscriptions",

"organizations_url": "https://api.github.com/users/jcho19/orgs",

"repos_url": "https://api.github.com/users/jcho19/repos",

"events_url": "https://api.github.com/users/jcho19/events{/privacy}",

"received_events_url": "https://api.github.com/users/jcho19/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 2023-01-26T01:45:45 | 2023-01-26T08:48:45 | 2023-01-26T08:48:45 | NONE | null | null | null | ### Describe the bug

My dataset folder, called "my_dataset", contains a "data" subfolder and a "metadata.csv" file.

The data folder contains only mp3 files, and metadata.csv consists of file locations like 'data/...mp3' and transcriptions. There are 400+ mp3 files with corresponding transcriptions in my dataset.

When I run the following:

`ds = load_dataset("audiofolder", data_dir="my_dataset")`

I get:

Using custom data configuration default-...

Downloading and preparing dataset audiofolder/default to /...

Downloading data files: 0%| | 0/2 [00:00<?, ?it/s]

Downloading data files: 0it [00:00, ?it/s]

Extracting data files: 0it [00:00, ?it/s]

Generating train split: 0 examples [00:00, ? examples/s]

Dataset audiofolder downloaded and prepared to /.... Subsequent calls will reuse this data.

0%| | 0/1 [00:00<?, ?it/s]

DatasetDict({

train: Dataset({

features: ['audio', 'transcription'],

num_rows: 1

})

})

### Steps to reproduce the bug

Create a dataset folder called 'my_dataset' with a subfolder called 'data' that contains mp3 files. Also create a metadata.csv that lists file locations like 'data/...mp3' and their corresponding transcriptions.

Run:

`ds = load_dataset("audiofolder", data_dir="my_dataset")`

### Expected behavior

It should generate a dataset with numerous rows.

### Environment info

Run on Jupyter notebook | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5465/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5465/timeline | null | completed | false |
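
The report for #5465 does not show the header of `metadata.csv`, so the cause is not established here. One thing worth checking is that the `audiofolder` documentation expects the metadata file to have a `file_name` column holding paths relative to the file itself. The sketch below writes such a file using only the standard library; the helper name `write_metadata`, the clip names, and the transcriptions are hypothetical.

```python
import csv
import tempfile
from pathlib import Path


def write_metadata(root, rows):
    """Write metadata.csv under root with a file_name column (hypothetical helper)."""
    (root / "data").mkdir(parents=True, exist_ok=True)
    metadata_path = root / "metadata.csv"
    with open(metadata_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["file_name", "transcription"])
        writer.writeheader()
        writer.writerows(rows)
    return metadata_path


# Build the layout in a temporary directory so nothing real is overwritten.
root = Path(tempfile.mkdtemp()) / "my_dataset"
metadata_path = write_metadata(
    root,
    [
        {"file_name": "data/clip_0001.mp3", "transcription": "hello world"},
        {"file_name": "data/clip_0002.mp3", "transcription": "second clip"},
    ],
)
header = metadata_path.read_text(encoding="utf-8").splitlines()[0]
print(header)

# With real mp3 files in place, loading would then look like:
# from datasets import load_dataset
# ds = load_dataset("audiofolder", data_dir=str(root))
```

The loading call is left commented out because it needs the `datasets` package and actual audio files.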

https://api.github.com/repos/huggingface/datasets/issues/5464 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5464/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5464/comments | https://api.github.com/repos/huggingface/datasets/issues/5464/events | https://github.com/huggingface/datasets/issues/5464 | 1,557,462,104 | I_kwDODunzps5c1PxY | 5,464 | NonMatchingChecksumError for hendrycks_test | {

"login": "sarahwie",

"id": 8027676,

"node_id": "MDQ6VXNlcjgwMjc2NzY=",

"avatar_url": "https://avatars.githubusercontent.com/u/8027676?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/sarahwie",

"html_url": "https://github.com/sarahwie",

"followers_url": "https://api.github.com/users/sarahwie/followers",

"following_url": "https://api.github.com/users/sarahwie/following{/other_user}",

"gists_url": "https://api.github.com/users/sarahwie/gists{/gist_id}",

"starred_url": "https://api.github.com/users/sarahwie/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sarahwie/subscriptions",

"organizations_url": "https://api.github.com/users/sarahwie/orgs",

"repos_url": "https://api.github.com/users/sarahwie/repos",

"events_url": "https://api.github.com/users/sarahwie/events{/privacy}",

"received_events_url": "https://api.github.com/users/sarahwie/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Thanks for reporting, @sarahwie.\r\n\r\nPlease note this issue was already fixed in `datasets` 2.6.0 version:\r\n- #5040\r\n\r\nIf you update your `datasets` version, you will be able to load the dataset:\r\n```\r\npip install -U datasets\r\n```",

"Oops, missed that I needed to upgrade. Thanks!"

] | 2023-01-26T00:43:23 | 2023-01-27T05:44:31 | 2023-01-26T07:41:58 | NONE | null | null | null | ### Describe the bug

The checksum of the file has likely changed on the remote host.

### Steps to reproduce the bug

`dataset = nlp.load_dataset("hendrycks_test", "anatomy")`

### Expected behavior

No error is thrown.

### Environment info

- `datasets` version: 2.2.1

- Platform: macOS-13.1-arm64-arm-64bit

- Python version: 3.9.13

- PyArrow version: 9.0.0

- Pandas version: 1.5.1 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5464/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5464/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/5463 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5463/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5463/comments | https://api.github.com/repos/huggingface/datasets/issues/5463/events | https://github.com/huggingface/datasets/pull/5463 | 1,557,021,041 | PR_kwDODunzps5IiGWb | 5,463 | Imagefolder docs: mention support of CSV and ZIP | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-01-25T17:24:01 | 2023-01-25T18:33:35 | 2023-01-25T18:26:15 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5463",

"html_url": "https://github.com/huggingface/datasets/pull/5463",

"diff_url": "https://github.com/huggingface/datasets/pull/5463.diff",